The immediate story was easy to misunderstand. On April 1-2, 2026, Anthropic confirmed that a Claude Code release packaging issue was caused by human error, not a security breach. Anthropic also said no customer data or credentials were exposed.

That matters. But the more important business lesson sits elsewhere. AI coding tools are often bought like harmless developer productivity add-ons because the demo looks simple: generate code, explain code, fix a bug, write a test. Under the hood, many of these products behave much more like enterprise runtime platforms. They can read repositories, execute actions, call remote services, apply policies, collect telemetry, and operate through background agents with varying approval boundaries.

For CIOs, IT Directors, and CTOs, that changes the buying conversation. The cheapest tool with the best demo is not automatically the safest operating model.

What Actually Happened With Claude Code

The reported issue centred on the since-deleted Claude Code 2.1.88 npm release. That package reportedly included a 59.8MB file or source map that exposed internal source references. Multiple reports say the exposed archive contained about 1,900 TypeScript files and more than 500,000 lines of code.

The follow-on response also drew attention. GitHub initially processed a DMCA notice against an entire network of roughly 8,100 repositories. Anthropic then partially retracted the request, narrowing it to the parent repository plus 96 forks.

Based on media reports and source analysis, the exposed code appeared to reveal hidden or experimental capabilities and gave outsiders a clearer view into the product’s system access, telemetry design, policy controls, and background-agent architecture. That is why this story matters beyond one packaging failure.

It is important to stay precise here. This was not a report of customer records being stolen. Anthropic says it was not a breach. The governance lesson comes from what the exposed material showed about how modern AI coding tools are designed and operated.

Why This Is a Governance Story Rather Than a Breach Story

In many organisations, AI coding tools are still evaluated as if they were advanced autocomplete. That framing is now too narrow.

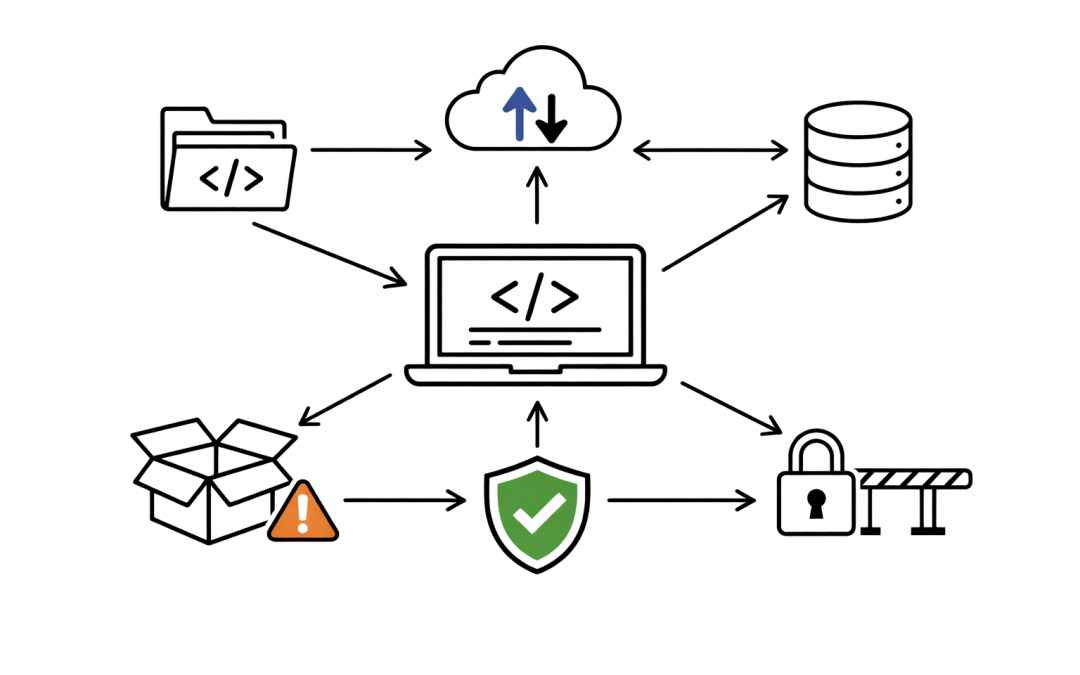

A modern coding assistant can touch source code, prompts, model outputs, terminal commands, background tasks, policy decisions, and sometimes external providers. Once a tool can inspect a codebase, call models over the network, take action inside development workflows, and update its own behaviour through vendor-controlled services, it stops being just a developer convenience feature.

It becomes part of the organisation’s runtime and governance surface.

That is the shift this incident should force. The question is not whether Claude Code is uniquely bad. It is not. The point is that the category itself has matured into something that needs the same scrutiny enterprises already apply to cloud workloads, endpoint management tools, SaaS platforms, and software supply chain dependencies.

The Procurement Questions Just Changed

When an AI coding platform is evaluated like a plugin, the shortlist usually gets driven by demo quality, price, and developer enthusiasm. When it is evaluated like an enterprise runtime platform, the questions become much more operational.

Technology leaders now need direct answers on data flows. What leaves the developer workstation? What gets sent to the vendor? What can be routed to another model provider? What metadata is collected even when no code is deliberately pasted into a prompt?

They also need clarity on retention. Anthropic’s own Claude Code documentation says prompts and model outputs are sent over the network for first-party usage. It also says commercial users generally get 30-day retention, while enterprise zero-data-retention is available. That is not a minor settings detail. It directly affects legal review, regulated workloads, and internal policy decisions.

Provider routing matters as well. Anthropic’s documentation says telemetry, error reporting, and feedback are off by default when Claude Code is used through Bedrock, Vertex, and Foundry, but on by default in first-party usage unless disabled. In plain English, the same coding tool can behave differently depending on whether the organisation consumes it directly from the model vendor or through a cloud platform such as AWS, Google Cloud, or Microsoft.
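
One way to make the routing difference concrete during a review is to write the defaults down as a small lookup. The Python sketch below is illustrative only: the route labels and the helper names `telemetry_on_by_default` and `routes_needing_opt_out` are assumptions made for this example, not vendor settings, and the defaults simply restate the documented behaviour described above.

```python
# Illustrative sketch: the route labels and helper names are assumptions
# for this example, not settings or identifiers from any vendor product.
FIRST_PARTY = "first_party"
CLOUD_ROUTES = {"bedrock", "vertex", "foundry"}


def telemetry_on_by_default(route: str) -> bool:
    """Return the default telemetry state described above for a route."""
    key = route.strip().lower()
    if key == FIRST_PARTY:
        return True  # on by default unless the organisation disables it
    if key in CLOUD_ROUTES:
        return False  # off by default through a cloud platform
    # Unknown routes are not guessed; they go back to the vendor.
    raise ValueError(f"Unknown route {route!r}: confirm defaults with the vendor")


def routes_needing_opt_out(routes: list[str]) -> list[str]:
    """List the routes where someone must actively switch telemetry off."""
    return [r for r in routes if telemetry_on_by_default(r)]


if __name__ == "__main__":
    print(routes_needing_opt_out(["first_party", "bedrock", "vertex"]))
```

Raising on an unknown route is a deliberate choice in this sketch: a default nobody has confirmed should surface as an open question, not be silently assumed.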

That changes procurement. A product comparison that ignores routing path, retention defaults, and telemetry defaults is no longer a serious evaluation.

Why Telemetry, Remote Control, and Update Paths Matter More Than Most Buyers Realise

This is where many buying processes still fall short.

An AI coding tool may look local because a developer sees it inside an IDE or terminal. The operating model may still depend on vendor-managed services for prompts, outputs, feature flags, policy checks, model selection, updates, telemetry, and approvals. In practice, that means the real control plane may sit outside the organisation’s boundary.

That has several consequences.

Default telemetry settings matter because code-adjacent context can reveal architecture, project names, internal APIs, error traces, and business logic even when no obvious secret is exposed. Remote policy controls matter because they can change what the tool is allowed to do, how it requests approval, and how it routes actions without the organisation rebuilding the product itself.

Update mechanisms matter because a packaging mistake, a silent feature change, or a new execution capability can materially alter risk. Background-agent design matters because the more work the tool performs asynchronously, the more important it becomes to define what can run automatically, what must be approved, and what must never cross an environment boundary.

That is why the safest question is no longer “does this tool write good code?” It is “what operating model are we importing into our engineering environment?”

Execution Permissions and Approval Boundaries Are Now Board-Level Concerns

The exposed Claude Code material reportedly showed how much system access and background-agent design existed in the product. That should not be treated as shocking in itself. Most useful AI coding platforms are moving in this direction because simple chat experiences do not deliver enough value on their own.

The business risk appears when organisations adopt those capabilities without matching governance.

If an agent can read files, open terminals, modify code, call services, or keep working in the background, leaders need hard boundaries around what is allowed locally, what is vendor-managed, and what requires human approval every time. They also need to know whether approvals are enforced in a way the organisation controls or in a way the vendor defines.
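
Those boundaries are easier to audit when they are written as an explicit policy table. The following is a minimal Python sketch under stated assumptions: the action names, the `Decision` enum, and the `decide` function are hypothetical and do not describe how Claude Code or any other product enforces approvals. It shows a default-deny policy in which background work never runs without a human decision.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"
    DENY = "deny"


# Hypothetical action categories, mirroring the capabilities listed above.
POLICY = {
    "read_file": Decision.ALLOW,
    "modify_code": Decision.REQUIRE_APPROVAL,
    "open_terminal": Decision.REQUIRE_APPROVAL,
    "call_service": Decision.REQUIRE_APPROVAL,
}


def decide(action: str, background: bool = False) -> Decision:
    """Return the organisation's decision for an agent action."""
    # Anything not explicitly listed is denied.
    decision = POLICY.get(action, Decision.DENY)
    # Background work is escalated: nothing is auto-allowed unattended.
    if background and decision is Decision.ALLOW:
        return Decision.REQUIRE_APPROVAL
    return decision


if __name__ == "__main__":
    for action, background in [("read_file", False), ("read_file", True), ("push_to_prod", False)]:
        print(action, background, decide(action, background).value)
```

The point of the sketch is ownership: a table like this lives in the organisation's own repository and change process, which is the difference between an approval boundary the organisation controls and one the vendor defines.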

For regulated environments, these are not abstract concerns. They affect change control, segregation of duties, auditability, incident response, and software supply chain assurance. Boards are already asking harder questions about third-party code, update channels, and data handling. AI coding tools now belong inside that same conversation.

Why This Matters in Australia

Australian organisations are operating in a stricter governance environment than many vendor demos acknowledge.

The Essential Eight has already pushed many businesses to mature application control, patching, administrative privilege, and macro or script governance. Those disciplines are directly relevant to AI coding tools. A product that can execute commands, move code, or operate through background tasks should be reviewed through the same lens as other privileged tooling, not treated as a low-risk assistant.

There is also a broader data governance issue. Mid-market organisations in healthcare, financial services, education, legal, government-adjacent sectors, and critical supply chains increasingly need to explain where sensitive data goes, how long it is retained, and who can influence the systems acting on it. Australian privacy obligations, customer commitments, and board expectations do not disappear because a tool is marketed as a developer productivity feature.

For many local organisations, the most defensible model may not be the cheapest direct subscription. It may be a more controlled path through a cloud provider or enterprise platform where retention, telemetry, identity integration, logging, and policy settings align better with existing governance.

The New Business Case for AI Coding Tools

The old business case was simple. Buy the tool that gives developers the biggest productivity lift at the lowest visible cost.

That logic is now incomplete. The real cost of an AI coding platform includes its data handling model, its telemetry defaults, its policy architecture, its update mechanism, its execution boundary, and the amount of vendor-managed behaviour it introduces into the development environment.

In other words, the best demo is not necessarily the best operating model.

The stronger business case is to treat AI coding tools like enterprise platforms from day one. That means evaluating local versus vendor-managed execution, confirming retention and telemetry defaults, understanding provider routing options, constraining permissions, and defining approval boundaries before rollout expands.
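
The five evaluation steps above can be tracked as a simple pre-rollout gate. This Python sketch is one possible shape, not a prescribed method: the `PlatformEvaluation` class, its field names, and the helper functions are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, fields


@dataclass
class PlatformEvaluation:
    """One record per AI coding platform under review (hypothetical fields)."""
    execution_model_reviewed: bool = False           # local vs vendor-managed
    retention_and_telemetry_confirmed: bool = False  # defaults checked
    provider_routing_understood: bool = False        # direct vs cloud platform
    permissions_constrained: bool = False
    approval_boundaries_defined: bool = False


def open_items(evaluation: PlatformEvaluation) -> list[str]:
    """Return the names of evaluation steps that are still outstanding."""
    return [f.name for f in fields(evaluation) if not getattr(evaluation, f.name)]


def ready_for_wider_rollout(evaluation: PlatformEvaluation) -> bool:
    """A platform passes the gate only when no step is outstanding."""
    return not open_items(evaluation)


if __name__ == "__main__":
    review = PlatformEvaluation(execution_model_reviewed=True,
                                permissions_constrained=True)
    print(ready_for_wider_rollout(review))
    print(open_items(review))
```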

Teams that do this well will still capture productivity gains. They will just do it without introducing unnecessary governance debt.

For Australian mid-market organisations, that is the real lesson from the Claude Code incident. Not panic. Not vendor bashing. Just a more mature standard for how these tools are bought and governed.

If your organisation is reviewing AI coding platforms and wants a clearer view of the security, governance, and operating-model trade-offs before standardising on one, our team can help assess the options in practical terms.