Anthropic just revealed the architecture behind Claude Managed Agents. For any organisation deploying AI agents in production, Anthropic’s engineering decisions carry real implications for governance, security, and vendor risk.

Here is what Australian IT leaders need to understand — and what questions they should be asking right now.

AI Agents Are Moving From Experiments to Infrastructure

The conversation around AI agents has shifted. They are no longer chatbots answering questions. They are autonomous systems performing multi-step work across codebases, cloud environments, and business processes.

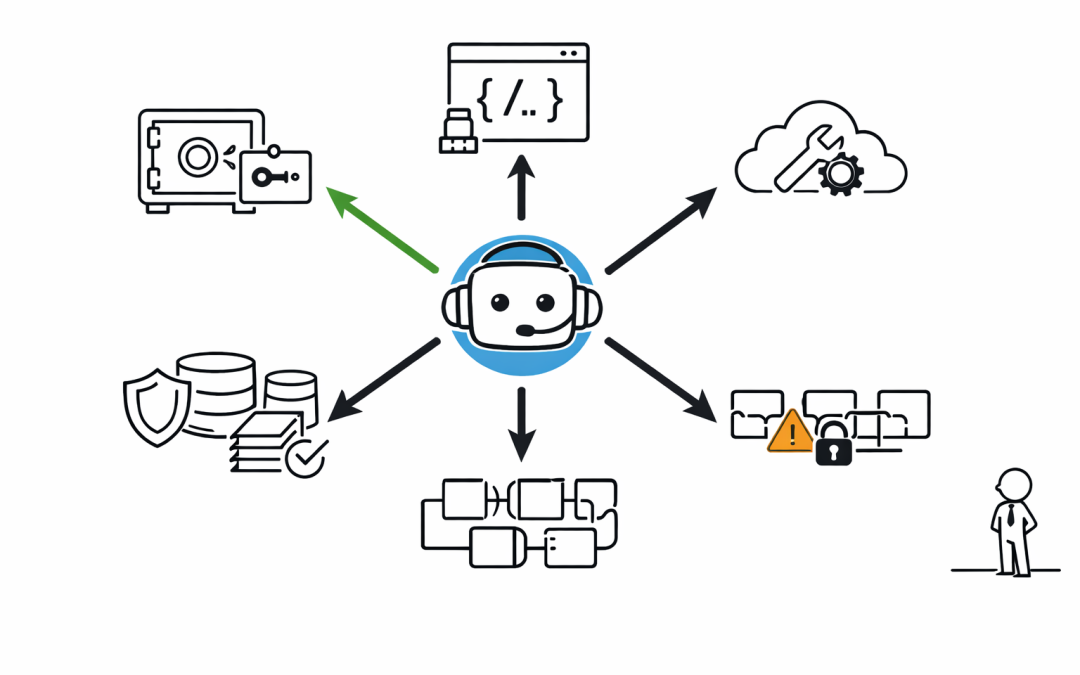

Anthropic’s Managed Agents service is designed for exactly this: long-horizon tasks where Claude operates independently over extended periods. The architecture decouples the “brain” (Claude and its orchestration harness) from the “hands” (sandboxes and tools that perform actions) and the “session” (a durable log of everything that happened).

This is not a minor technical detail. It is a fundamental design choice that determines how enterprises can govern, audit, and secure their AI agent deployments.
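To make the separation concrete, here is a minimal sketch of the brain, hands, and session split in Python. Every class and function name below is hypothetical and chosen for illustration; none of it is Anthropic’s actual interface. The point is only that the component deciding what to do, the component doing it, and the record of what happened can be three separate pieces.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional, Protocol


class Hands(Protocol):
    """Anything that carries out an action: a sandbox, a tool, a remote API."""
    def execute(self, action: str) -> str: ...


class Brain(Protocol):
    """Chooses the next action from the session history; None means finished."""
    def next_action(self, history: list[dict]) -> Optional[str]: ...


@dataclass
class Session:
    """Record of events, held outside both the brain and the hands."""
    events: list[dict] = field(default_factory=list)

    def append(self, kind: str, payload: str) -> None:
        self.events.append({"seq": len(self.events), "kind": kind, "payload": payload})


def run(brain: Brain, hands: Hands, session: Session, max_steps: int = 10) -> Session:
    """Drive the loop: ask the brain, act with the hands, record both in the session."""
    for _ in range(max_steps):
        action = brain.next_action(session.events)
        if action is None:
            break
        session.append("action", action)
        session.append("result", hands.execute(action))
    return session


class ScriptedBrain:
    """Toy brain that works through a fixed plan, tracking progress via the session only."""
    def __init__(self, plan: list[str]) -> None:
        self.plan = list(plan)

    def next_action(self, history: list[dict]) -> Optional[str]:
        done = sum(1 for event in history if event["kind"] == "action")
        return self.plan[done] if done < len(self.plan) else None


class EchoHands:
    """Toy hands that just report what they were asked to do."""
    def execute(self, action: str) -> str:
        return f"ran: {action}"


class UpperHands:
    """A second toy implementation, to show the hands can be swapped."""
    def execute(self, action: str) -> str:
        return action.upper()
```

Calling `run(ScriptedBrain(["list files", "run tests"]), EchoHands(), Session())` and then repeating it with `UpperHands()` changes how actions are executed without touching the brain or the session. In this sketch the toy brain keeps no progress counter of its own and reads its position from the session, which is one way a separately stored session can help governance: the record, not the model, is the source of truth.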

The Security Architecture Matters More Than the Model

One of the most significant decisions in Managed Agents is how credentials are handled. In Anthropic’s earlier architecture, the AI model, its orchestration layer, and the execution sandbox all lived in the same container. That meant a prompt injection attack only needed to convince Claude to read its own environment variables to access credentials.

The new architecture removes this attack path structurally. Credentials are never reachable from the sandbox where Claude’s generated code runs. For Git, each repository’s access token is used to clone the repository during sandbox initialisation and is wired into the local git remote, so push and pull work without the agent ever handling the token. For external tools connected via MCP (Model Context Protocol), OAuth tokens sit in a secure vault. Claude calls tools through a dedicated proxy that retrieves credentials independently.

For organisations operating under the Essential Eight or other ACSC guidance, this separation of credential management from execution environments is exactly the kind of control that security teams should be demanding from any AI vendor.
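The vault-and-proxy pattern described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Anthropic’s implementation: `Vault`, `ToolRequest`, `ToolProxy`, and the fake issue tracker are all hypothetical names. What it shows is the structural property worth asking vendors about: the request the agent can construct has no field for a secret, and the token is attached on the far side of the proxy.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


class Vault:
    """Hypothetical secret store that lives outside the agent's sandbox."""
    def __init__(self, secrets: dict[str, str]) -> None:
        self._secrets = dict(secrets)

    def token_for(self, tool: str) -> str:
        return self._secrets[tool]


@dataclass(frozen=True)
class ToolRequest:
    """All the sandboxed agent can send: a tool name and arguments, no secrets."""
    tool: str
    args: dict


# A backend receives the arguments plus a token supplied by the proxy.
Backend = Callable[[dict, str], str]


class ToolProxy:
    """Sits between the sandbox and external tools, attaching credentials itself."""
    def __init__(self, vault: Vault, backends: dict[str, Backend]) -> None:
        self._vault = vault
        self._backends = backends

    def call(self, request: ToolRequest) -> str:
        token = self._vault.token_for(request.tool)  # looked up outside the sandbox
        return self._backends[request.tool](request.args, token)


def fake_issue_tracker(args: dict, token: str) -> str:
    """Stand-in for an external service that checks the token."""
    if token != "secret-oauth-token":
        raise PermissionError("invalid token")
    return f"created issue: {args['title']}"


proxy = ToolProxy(
    vault=Vault({"issues": "secret-oauth-token"}),
    backends={"issues": fake_issue_tracker},
)
result = proxy.call(ToolRequest(tool="issues", args={"title": "Fix login"}))
```

In this sketch a prompt injection that takes over the agent can still ask the proxy to call a tool, but it has nothing to read that would reveal the token. That is the difference between a structural control and a policy the model is merely instructed to follow.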

Governance Needs a Durable Audit Trail

Managed Agents introduces a session log that sits outside Claude’s context window. Every event — every tool call, every decision, every output — gets recorded in a durable, append-only log.

This is critical for governance. When an AI agent performs work autonomously over hours or days, organisations need a complete trail of what happened and why. The session log provides that. It is not just a debugging tool. It is an audit record that compliance teams can interrogate independently of the AI model itself.

Australian organisations subject to privacy legislation, financial services regulations, or government procurement standards should view this as table stakes. Any AI agent platform that cannot produce an immutable, complete audit trail of agent activity is a governance gap waiting to be exploited.
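For teams wondering what “append-only” and “immutable” can mean in practice, the sketch below shows one common technique: chaining each entry to the previous one with a hash so that later edits are detectable. Hash chaining is our illustrative assumption here. Anthropic has not said its session log works this way, and the class and field names are hypothetical.

```python
from __future__ import annotations

import copy
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    """Hash the previous entry's hash together with this event's canonical JSON."""
    body = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


class AppendOnlyLog:
    """Log that exposes append and read, but no update or delete."""
    def __init__(self) -> None:
        self._entries: list[dict] = []

    def append(self, event: dict) -> dict:
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS
        entry = {
            "seq": len(self._entries),
            "event": copy.deepcopy(event),
            "prev": prev_hash,
            "hash": _digest(prev_hash, event),
        }
        self._entries.append(entry)
        return copy.deepcopy(entry)

    def entries(self) -> list[dict]:
        # Return copies so callers cannot alter the stored record.
        return copy.deepcopy(self._entries)


def verify(entries: list[dict]) -> bool:
    """Recompute the chain; any edited, removed, or reordered entry breaks it."""
    prev_hash = GENESIS
    for position, entry in enumerate(entries):
        if entry["seq"] != position or entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


log = AppendOnlyLog()
log.append({"kind": "tool_call", "tool": "git", "action": "push"})
log.append({"kind": "output", "text": "pushed 1 commit"})

exported = log.entries()
tampered = log.entries()
tampered[0]["event"]["action"] = "force-push"
```

Here `verify(exported)` holds and `verify(tampered)` does not, which is the property a compliance team cares about: the record can be checked without trusting the system that produced it. When evaluating a platform, ask what mechanism backs the vendor’s immutability claim.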

Vendor Lock-In Risk Is Evolving

Anthropic describes Managed Agents as a “meta-harness” — a system designed to be unopinionated about the specific orchestration harness that runs Claude. The architecture supports swapping components without disturbing others. Different harnesses, different sandboxes, different tools — all interchangeable behind stable interfaces.

This sounds like flexibility. But it also introduces a new form of vendor dependency.

When an organisation builds its agent workflows on top of Managed Agents, it is committing to Anthropic’s interface contracts, their session management model, and their security architecture. The portability of the underlying model matters less if the orchestration layer becomes the real lock-in point.

IT leaders should be asking: if we need to move to a different AI vendor in eighteen months, what is the migration path? Can we export session logs? Are the tool interfaces standard enough to work with other providers? What happens to our audit data if we leave?
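One practical way to test the export question is to check whether session events can be written to a plain, vendor-neutral format and read back without loss. The sketch below uses JSON Lines and assumes the events are already available as dictionaries. It does not assume any particular Managed Agents export API, and the event fields shown are hypothetical.

```python
from __future__ import annotations

import io
import json
from typing import Iterable, TextIO


def export_jsonl(events: Iterable[dict], out: TextIO) -> int:
    """Write one JSON object per line and return the number of events written."""
    count = 0
    for event in events:
        out.write(json.dumps(event, sort_keys=True, ensure_ascii=False) + "\n")
        count += 1
    return count


def load_jsonl(src: TextIO) -> list[dict]:
    """Read the events back, skipping blank lines."""
    return [json.loads(line) for line in src if line.strip()]


# Hypothetical events, as they might look after retrieval from a platform.
events = [
    {"seq": 0, "kind": "tool_call", "tool": "git", "args": {"command": "pull"}},
    {"seq": 1, "kind": "output", "text": "Already up to date."},
]

buffer = io.StringIO()  # stands in for a file in your own storage
written = export_jsonl(events, buffer)
buffer.seek(0)
restored = load_jsonl(buffer)
```

If `restored` matches `events`, the audit data survives outside the vendor’s systems in a format any other tool can parse. The harder question for a real deployment is whether the platform exposes every event in the first place, which is worth confirming in writing.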

The Australian Context Adds Another Layer

Anthropic recently signed a Memorandum of Understanding with the Australian government on AI safety and research. The company is opening a Sydney office and investing in partnerships with Australian research institutions including ANU, the Garvan Institute, and the Murdoch Children’s Research Institute.

This is positive for Australian enterprises. It signals commitment to the local market and alignment with Australia’s National AI Plan. But it does not eliminate the need for independent due diligence.

The MOU covers safety research and economic impact tracking. It does not guarantee data residency, sovereign hosting, or compliance with specific industry regulations. Organisations in healthcare, financial services, or government need to dig deeper than the press release.

What This Means for Enterprise AI Strategy

Claude Managed Agents represents a maturing of the AI agent market. The architecture is thoughtful. The security model is genuinely improved. The audit capabilities are a step forward.

But maturity brings new questions. Here is what we recommend organisations evaluate:

Security architecture. Does the AI agent platform structurally separate credentials from the execution environment? Or does it rely on policy-based controls that a sufficiently clever prompt injection could bypass?

Audit completeness. Can the platform produce a full, immutable record of every action the agent took? Can that record be exported and stored in your own systems?

Vendor portability. Are the interfaces open enough to migrate to another provider? What is the cost of switching if the vendor changes pricing, terms, or architecture?

Data sovereignty. Where does agent processing occur? Where are session logs stored? Does the platform meet Australian data residency requirements for your industry?

Governance integration. Can the platform integrate with your existing GRC (governance, risk, and compliance) tooling? Or does it create a parallel governance silo?
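For teams that want to track these five areas across several vendors, the questions above can be kept as a simple structured checklist. The sketch below is one hypothetical way to do that in Python. The class and function names are ours, and the condensed question wording is a paraphrase of the list above.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class Criterion:
    name: str
    question: str
    satisfied: Optional[bool] = None  # None means not yet assessed


def default_criteria() -> list[Criterion]:
    """Fresh copy of the five evaluation areas, all unassessed."""
    return [
        Criterion("Security architecture",
                  "Are credentials structurally separated from the execution environment?"),
        Criterion("Audit completeness",
                  "Can a full, immutable record be exported to our own systems?"),
        Criterion("Vendor portability",
                  "Are the interfaces open enough to migrate to another provider?"),
        Criterion("Data sovereignty",
                  "Do processing and log storage meet our data residency requirements?"),
        Criterion("Governance integration",
                  "Does the platform integrate with our existing GRC tooling?"),
    ]


def unresolved(criteria: list[Criterion]) -> list[str]:
    """Names of criteria that are unassessed or have failed."""
    return [c.name for c in criteria if c.satisfied is not True]


assessment = default_criteria()
assessment[0].satisfied = True   # example: security architecture confirmed
assessment[3].satisfied = False  # example: data residency not met
remaining = unresolved(assessment)
```

Treating an unassessed item the same as a failed one is a deliberate choice in this sketch: a question nobody has answered yet should block sign-off just as a known gap would.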

The Bottom Line

AI agents are becoming infrastructure. And like all infrastructure, they need to be governed, audited, and evaluated for vendor risk — not just admired for their capabilities.

Claude Managed Agents is one of the more transparent examples of how AI vendors are thinking about these problems. That transparency is valuable. But transparency from the vendor does not replace rigorous evaluation from the buyer.

The organisations that get this right will be the ones asking hard questions now, before autonomous AI agents are embedded so deeply in their operations that switching becomes impractical and governance becomes an afterthought.